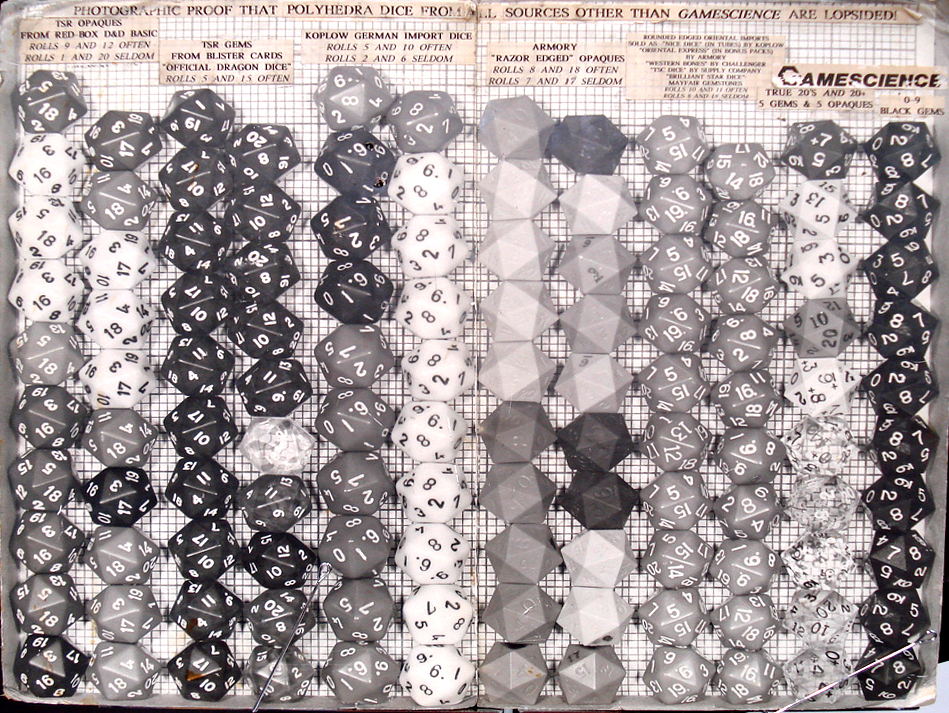

Lou Zocchi is a man who cares deeply about dice. Zocchi’s well-practiced speech on dice quality is famous. It’s fairly entertaining if you have 20 minutes to spare. Part of his argument is the above photograph. Zocchi stacked twenty-sided dice from several companies. Each stack places the same numbers on the top and bottom. For example, one stack might have 1 placed on top of 20 repeatedly, while the next stack might have 9 placed on top of 12 repeatedly. Based on the height of the stacks, it appears that everyone’s dice are irregularly shaped. Everyone’s, except for Zocchi’s.

Did Zocchi pick and choose for best effect? If it was accurate at one point, is it still? I’m pretty sure that the photograph dates to the late 1980s or early 1990s. One of the companies, TSR, hasn’t existed since 1997. Is this still a fair comparison? Eva and I set out to find out.

(Click to see it in all of its obsessive glory. You can also see the monstrous 6,219×1,920 image.)

These are stacks of twenty-sided dice from Crystal Caste, Chessex, Koplow, and GameScience. There are 20 from each company, 10 opaque and 10 translucent or transparent. I lost one of the Chessex translucent dice, so there are only 9 in those stacks. We stacked each set of 10 dice three times, once stacking 20s and 1s, once stacking 12s and 9s, and once stacking 11s and 10s. For the GameScience dice, we also stacked the 14s and 7s, as the 7s have the worst of the flashing.

There is a clear problem with the Crystal Caste dice. We didn’t notice anything odd when we originally purchased them, but their elongated shape is obvious when you know to look for it. Chessex has similar, but less severe, irregularities. Koplow’s dice hold up well in this test, especially the translucent dice. GameScience’s dice are incredibly consistent… except for the 14/7 sides.

There is substance to Zocchi’s claims, although Koplow is a serious challenger. But the photographs are really a publicity stunt, colorful, not rigorous science. So we broke out a digital caliper and measured the distance between every opposite pair of faces for every one of the 79 dice.

Once we had the measurements, I analyzed them. For each individual die I calculated the largest difference between the widths of each pair of sides. Across all of the dice for a given manufacturer, I then calculated the minimum, average, and maximum differences. I also calculated the standard deviation across all of the face pairs across all of the dice for a manufacturer. If a company’s dice are uneven, it will show up here. I broke Crystal Caste into two groups because it became clear that their translucent and opaque dice are wildly different.
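For readers who want to repeat the calculation, here is a minimal sketch of it in Python. It assumes that "largest difference" means the widest face pair minus the narrowest face pair on a single die, and that the standard deviation is a sample standard deviation over every width for a manufacturer; both are interpretations, not a record of the exact spreadsheet formulas. The function names and the sample widths are made up for illustration.

```python
from statistics import mean, stdev

def die_spread(widths):
    """Widest minus narrowest face-pair width for one die (inches)."""
    return max(widths) - min(widths)

def summarize(dice):
    """Summarize one manufacturer.

    `dice` is a list of dice; each die is a list of face-pair widths.
    """
    spreads = [die_spread(widths) for widths in dice]
    all_widths = [w for widths in dice for w in widths]
    return {
        "min": min(spreads),
        "avg": mean(spreads),
        "max": max(spreads),
        # Assumption: sample standard deviation over all widths.
        "stddev": stdev(all_widths),
    }

# Made-up widths, NOT the measured data. A real d20 has 10 face
# pairs; only three per die are shown to keep the example short.
sample_dice = [
    [0.750, 0.752, 0.755],
    [0.748, 0.751, 0.760],
]
summary = summarize(sample_dice)
```

Feeding in the ten widths for each die from the raw data, grouped by manufacturer, should give figures comparable to the table that follows, assuming the interpretation above is right.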

Differences in paired face widths by manufacturer in inches

| Company | Min | Avg | Max | StdDev |
|---|---|---|---|---|
| Chessex | 0.014 | 0.020 | 0.027 | 0.010 |
| GameScience | 0.002 | 0.005 | 0.009 | 0.003 |
| Crystal Caste | 0.016 | 0.026 | 0.044 | 0.022 |
| …CC Opaque | 0.016 | 0.017 | 0.021 | 0.006 |
| …CC Translucent | 0.028 | 0.035 | 0.044 | 0.012 |
| Koplow | 0.006 | 0.010 | 0.020 | 0.006 |

The numbers reinforce what is visible in the photograph. Crystal Caste’s translucent dice are the most irregular. Crystal Caste’s opaque and Chessex’s entire line are more uniform, but aren’t in the same class as Koplow and GameScience. Koplow’s dice are very uniform, but GameScience trumps everyone else.

We avoided the flashing on the GameScience dice as it was hard to get repeatable results measuring on the flashing. Based on the stacking test it’s clear that the flashing adds a significant amount of irregularity, so I recommend sanding off the flashing.

I was also interested in seeing how consistent a manufacturer’s dice are. Low consistency suggests uneven manufacturing and makes an entire line of dice suspect. For each pair of faces I calculated the standard deviation across all dice for a manufacturer, then identified the largest value for each manufacturer.
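A minimal sketch of that consistency calculation, again in Python. It assumes every die lists its face pairs in the same order (1-20, 2-19, and so on) and uses a sample standard deviation; the function names and the toy numbers are hypothetical.

```python
from statistics import stdev

def pair_stddevs(dice):
    """Standard deviation of each face pair's width across all dice.

    `dice` is a list of dice; each die lists its face-pair widths in
    the same order, so zip(*dice) groups widths by face pair.
    """
    return [stdev(column) for column in zip(*dice)]

def worst_pair(dice):
    """Largest per-pair standard deviation for a manufacturer."""
    return max(pair_stddevs(dice))

# Toy data: three dice with two face pairs each, NOT measured values.
toy_dice = [
    [1.0, 2.0],
    [1.0, 4.0],
    [1.0, 6.0],
]
per_pair = pair_stddevs(toy_dice)   # first pair never varies
largest = worst_pair(toy_dice)
```

The per-pair values correspond to the face-pair columns of the table, and the largest one corresponds to its "Max" column.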

Width standard deviation by manufacturer

| Company | Max | 1-20 | 2-19 | 3-18 | 4-17 | 5-16 | 6-15 | 7-14 | 8-13 | 9-12 | 10-11 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Chessex | 0.014 | 0.014 | 0.010 | 0.006 | 0.006 | 0.006 | 0.006 | 0.010 | 0.009 | 0.008 | 0.007 |
| GameScience | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.003 | 0.002 | 0.003 |
| Crystal Caste | 0.036 | 0.036 | 0.028 | 0.014 | 0.013 | 0.015 | 0.016 | 0.029 | 0.028 | 0.014 | 0.013 |
| …CC Opaque | 0.002 | 0.002 | 0.001 | 0.001 | 0.001 | 0.001 | 0.002 | 0.001 | 0.001 | 0.002 | 0.002 |
| …CC Translucent | 0.006 | 0.006 | 0.003 | 0.003 | 0.005 | 0.004 | 0.003 | 0.004 | 0.006 | 0.002 | 0.004 |
| Koplow | 0.008 | 0.008 | 0.006 | 0.005 | 0.004 | 0.007 | 0.005 | 0.005 | 0.005 | 0.007 | 0.003 |

These results are more surprising. Chessex’s dice are the least consistent. Koplow’s are much more consistent. Crystal Caste’s translucent dice are surprisingly quite consistent. GameScience makes highly consistent dice. The biggest surprise is Crystal Caste’s opaque dice, which were the most consistent. This leads me to conclude that Crystal Caste’s misshapenness is not the result of inconsistent manufacturing processes, nor something as random as being tumbled as Zocchi claims. I believe Crystal Caste has malformed molds or master dice. When Eva bought the dice, she was told that the opaque dice, which we found to be more regular, were from the first few batches of a new set of molds. If Crystal Caste has moved to these new molds, it’s possible that their quality has risen to roughly the same level as Chessex’s.

Clearly the shape of a die impacts how it lands, but it’s hard to say how much it affects the randomness. Sure, GameScience dice seem better than the others, but are Koplow’s random enough for actual play? Determining this requires actually rolling the dice, a task Eva and I plan to undertake in the future. In the meantime, check out this fascinating article suggesting that GameScience’s dice are measurably more random than Chessex’s… except when it comes to rolling 14, the side opposite the flashing! There is also some good research showing a distressing bias toward 1 on Chessex and Games Workshop six-sided dice.

Added 2013-02-26: Matthew J. Neagley has done some more analysis of our measurements over at Gnome Stew.

For myself, I was impressed with Koplow’s dice, but they fail to arrange the numbers so that the sum of opposite sides equals the largest number on the die plus one. That won’t bother some people, but it drives me crazy. I foresee more GameScience dice in my future.

Raw Data and Results

Methodology

The Dice

Eva purchased the dice in 2009, directly from each company’s booth at Gen Con. She attempted to get an assortment of colors to avoid bias from a single batch of dice. Eva told each company about our plans to measure the dice and asked if there were particular dice they wanted us to use. They uniformly said to choose whichever dice she liked. The GameScience staff reminded her to stack them on the side with the flashing as well.

The dice are unmodified and have not been subjected to significant wear and tear since their purchase. We have not used them for any games. They spent most of their life sitting in plastic baggies in a storage tub on a shelf. We left the flashing on the GameScience dice.

The Photographs

I built a measurement framework to hold the dice out of precision engineered Danish scientific apparatus: Legos. The framework has uneven legs so that it leans backward, simplifying stacking dice. The framework has two “grooves” into which dice can be stacked.

I mounted the camera onto a tripod pointed into the corner of a built-in shelf. I pushed a blue Lego baseplate with a smooth center area into the corner. I placed the framework onto the smooth center area and pushed it into the corner where it was stopped by the Lego studs at the end. By pushing the blue plate into the corner of the shelf and the framework into the corner of the plate, I could ensure consistent placement between photographs.

I put sets of dice into each groove, 10 at a time (9 for the Chessex translucent), stacking so that a given pair of numbers was always on the tops and bottoms: 20/1, 12/9, 11/10, and for GameScience only 14/7. Koplow dice are numbered differently; there is no 12/9 pairing, so I stacked 12/2 instead.

I stacked the dice so that the triangle of the face on top roughly aligned with the triangle on the face touching it, causing the dice to alternate in facing. I marked each stack with a label sitting at the bottom of the framework. (You can see the labels, a bit of pink, at the bottom of some of the stacks.) I repeated for each pair of sides. The same dice are used in each case, but the order of the dice was not preserved. I ordered the dice using the scientific principle of “whatever I happened to grab,” with the exception of striving to ensure that the topmost die was reasonably visible against the background.

I loaded the photographs into the GNU Image Manipulation Program, sliced the photographs into individual stacks, and grouped the stacks by manufacturer and translucency. I used the top and bottom edges of Legos at the top and bottom of the framework for alignment. No scaling was done. Because the grooves in the framework were very close, edges of the adjacent stack are visible in the final image.

The Measurements

Eva and I measured the dice using Wixey Digital calipers model wr100, accurate to a thousandth of an inch. In the case of the 7 face on the GameScience dice, the face with worst flashing, we avoided the flashing as much as we could to ensure repeatable results.

Great job! Do you think if the flashing was sanded first the GameS would be a much clearer winner?

Another test of GS that needs to be done is their destructibility. Zocchi used to claim his dice would outlast any other in tests of strength and durability because of the higher grade of plastic used. Bang with hammer, drop from heights, etc. would be interesting to see!

Based on our measurements, I’m confident that they would stack very evenly.

Not sure I could bring myself to abuse the poor dice as you recommend. 🙂

I ran destructibility tests on Game Science dice with a concrete floor and a 12 oz claw hammer.

None of the contestants ever won a free GS Gem die by smashing or even denting the GS opaque die used in the tests! I also managed to shatter a GS Zocchihedron (D100) the first time I saw one, by trying to repeat the same test when I went to Pacific Origins to help Lou with his booth! Strangely, it survived the first two blows, before Lou told me not to try that again – “They’re hollow, not solid cast, Major!”

I started gaming in 1977 and all my early dice came from boxed sets of whatever game I had bought. I could tell that they were crap. I finally got the chance to buy new dice and my FLGS recommended GameScience, so I bought 2 “sets”. I spent 20 minutes picking matching colored pairs of each die size so I would have a full set of dice that would be quick to use at the table. I also got extra d6s, a blue and red set of d10s for percentile dice, and 2 extra d20s. Over the years I have added 3 more full sets of GameScience to my dice bag.

I also have about 1000+ dice of other types, from every manufacturer, made from many different substances. I will periodically get a new set and begin using them, putting my GameScience dice away “for good”, only to find myself going back to them again and again. I could not tell why I preferred them, and could only defend my continued use by saying I liked the way they sounded when they hit the table. You have given me a great gift. I can now say that while I intend to keep “collecting” dice (cuz they are cool) I will never again forsake my trusty GameScience pals.

Game Science dice used to be great. Now they are absolute crap. Every set I’ve looked at are marred with huge mold imperfections that, at times, make the dice illegible and un-inkable.

Zocchi’s original dice specifications were wonderful. However, the people running Game Science now don’t seem to care one bit and are selling a VERY inferior product.

@Bob

If inking and readability are your main concerns, you could “fill” the illegible numbers with paint of a color similar to the die body, then pick out the numbers properly with a contrasting color. With the better faces, you can simply fill them with the contrasting ink. This is more work, but not too much more compared to inking dice in the first place.

If your concerns are also that these voids create fair rolling issues, we’ll have to wait for Alan’s next article to address those issues.

Hi Bob,

I had one problem with a D20 from Game Science that was deformed (wouldn’t sit flat) and when I brought it to Game Science’s attention they replaced it without question. Have you tried contacting the company with your concerns? It’s possible you ran into a batch of genuinely defective dice and if that’s the case they’ll almost certainly want to know about it. 🙂

I have two complete sets of GameScience dice, and both have some terrible flaws: crests, bubbles, and one of the D4s has a huge lump in one side. I don’t expect this from a $35 dice set.

There were a few years where quality went downhill. The story I heard, but cannot verify, is that the original manufacturer (not Zocchi!) was a small operation, basically one guy, and that he was getting old and having difficulties. I have a few dice from that era, and they aren’t so good. Since then GameScience has moved to a new manufacturer and quality should be better. The twenty dice purchased for this research all seem pretty good to me. I’m told by my wife and others that GameScience is quite good about replacing flawed dice.

When we did our 10,000 roll test of GameScience vs Chessex it’s true that GameScience rolled more close to random (other than the 7/14 sides) but there are two important things to note: *neither* rolled truly random — even without the 7/14 sides the GameScience dice rolled outside the margin of error; and even for the worse sides on the Chessex dice you’d need to roll it thousands of times before you’d see any difference (and that difference would be one number coming up a few times more than it should have).

@Brian

Sounds like you fell into the “curse of significance”. That is, the larger your sample size, the more exactly you have to match a potential distribution to not reject a match. So eventually you hit a point where you’re “cursed” to always reject your hypothesis. This is one of the reasons why some statisticians favor visual interpretation of plots to strict tests, though there are good arguments on both sides of the fence.

P.S. this is also sometimes called being “Doomed to Significance” I love the terms because they have a very fun flavor in what can occasionally be a dry science.

Dunno — but the smaller your sample size, the less likely you are to have significant results. Personally, I love big sample sizes, ’cause they give good data.

Well, the issue is that NO die is ever going to give a perfectly uniform distribution, which is provable in both the hand-waving sense and in the mathematical sense.

In the mathematical sense: die production can be thought of as a process that creates a random vector of 20 probabilities that add to 1. Thought of this way, it is a continuous random variable with an infinite number of possible outcomes. As such, the probability that any given specific outcome is produced is always 0. Instead only ranges of outcomes have positive probability of being observed. Thus, the probability of a die having the outcome of being fair, which is a specific single outcome, is 0 and will always be 0 no matter how perfect your manufacturing processes are.

So NO die is fair and testing them “for fairness” is kind of pointless. The issue is instead if their deviation from fairness is detectable within n rolls. With 10,000 rolls it’s almost guaranteed that you’re going to reject fairness but that’s not useful because we don’t want a test to see if they are fair, we want a test that checks if they’re reasonably fair within, for example, a play session, not a black box that just always says reject which while 100% accurate is of no help in making decisions.
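To put rough numbers on this, here is a small Python sketch of how the expected value of Pearson’s chi-square statistic grows with the number of rolls for a slightly unfair d20. The biased die is invented for illustration (one face at 6%, its opposite at 4%, the rest at 5%), and the function name is hypothetical. The 30.14 cutoff is the standard 0.05-level critical value for 19 degrees of freedom.

```python
def expected_chi_square(probs, n):
    """Expected Pearson chi-square statistic against a fair die.

    `probs` are the die's true face probabilities and `n` is the
    number of rolls. For each face, E[(O - n*q)^2] / (n*q) equals
    p*(1 - p)/q + n*(p - q)^2/q, where q = 1/len(probs).
    """
    q = 1.0 / len(probs)
    sampling_noise = sum(p * (1 - p) for p in probs) / q
    bias_signal = n * sum((p - q) ** 2 for p in probs) / q
    return sampling_noise + bias_signal

CRITICAL_95_DF19 = 30.14  # chi-square critical value, alpha = 0.05, 19 df

fair = [0.05] * 20
# Hypothetical, mildly unfair die: one face favored by a single
# percentage point at the expense of its opposite face.
biased = [0.06] + [0.05] * 18 + [0.04]

for rolls in (100, 1_000, 10_000):
    print(rolls, round(expected_chi_square(biased, rolls), 2))
```

For a truly fair die the expected statistic stays at 19 regardless of the number of rolls. For the invented die it stays below the cutoff at 100 and 1,000 rolls and passes it by 10,000, which is the sense in which a large enough test will reject dice whose flaws would never matter in a play session. This compares an expected value to a cutoff; it is not a full power calculation.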

This just doesn’t apply to dice by the way. It applies to many similar problems which is why the current statistical fad of using “big data” (huge compiled databases of customer data and the like) is kind of troubling.

We aren’t trying to see if a specific distribution is rolled (and the probability is of that is never 0). We can apply mathematical tests to determine the range of results that a fair die will produce. The more samples (rolls) we have, the smaller that range becomes and the easier it is to tell both the probability that a die is rolling fairly, and, if it’s not fair, how unfairly it’s rolling.
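As a rough illustration of how that range narrows, this Python sketch uses the normal approximation to the binomial to estimate the band of observed frequencies a fair die should usually produce for any single face. The function name and the choice of a 95% band (z = 1.96) are assumptions for the example, not something taken from the roll test itself.

```python
import math

def fair_share_range(sides, rolls, z=1.96):
    """Approximate band for one face's observed frequency on a fair die.

    Normal approximation to the binomial: p +/- z * sqrt(p*(1-p)/n).
    With z = 1.96 the band covers roughly 95% of fair outcomes.
    """
    p = 1.0 / sides
    standard_error = math.sqrt(p * (1 - p) / rolls)
    return p - z * standard_error, p + z * standard_error

# The band around the fair 5% share of a d20 tightens as rolls grow.
for rolls in (100, 1_000, 10_000):
    low, high = fair_share_range(20, rolls)
    print(f"{rolls:>6} rolls: {low:.4f} to {high:.4f}")
```

At 10,000 rolls the band for a d20 face is roughly 4.6% to 5.4%, so a face landing well outside it is hard to explain by chance alone.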

Casinos, in fact, use this to spot check to see if loaded or unfair dice are being used on the tables.

Can’t reply to your later reply for some reason so I’m backing up the chain a bit.

You say “We aren’t trying to see if a specific distribution is rolled” and then you say “The more samples (rolls) we have, … the easier it is to tell … the probability that a die is rolling fairly…”

What I’m saying is that those are the same thing. Saying a die is “fair” is saying that it rolls with a single specific probability distribution, specifically f(x) = 1/(# sides) for all sides.

What I then go on to say is that since NO die rolls fairly, and you are correct in saying “The more samples (rolls) we have, … the easier it is to tell … the probability that a die is rolling fairly…” that you will NEVER find a fair die with 10,000 rolls. At that point, the power of your test is too great to ever NOT reject any die, because they are all imperfect.

Now when you say “We can apply mathematical tests to determine the range of results that a fair die will produce.” you are partially correct. There is no need for mathematical tests to determine the range of results a fair die will produce. A fair die will produce any string of results of any length provided each of the results within the string are valid results(numbers that can be rolled).

What you mean to say is that you can apply mathematical tests to determine the range of results that are LIKELY to be produced by a fair die. This difference is that “1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1” is a result that will be produced by a fair die, but very rarely.

(as an aside, this difference is why in most hypothesis test situations alpha is equal to your chance of rejecting H0 in error, ie: type 1 error )

You’re right that Casinos use goodness of fit tests to test their dice, but this does not make casino dice fair (like all other dice they are unfair, just less so) nor do casinos use 10,000 rolls to test dice in this way since it would result in them finding all of their dice unfair (and throwing them all away).

For the width measurements, did you measure from the centres of the faces or from across the edges? I have several Gamescience d6s that are so concave that the centres of the faces sit up to 2mm below what the plane of the face ‘should’ be…

None of the dice we tested (from any manufacturers) had obvious concavities in the sides. I wasn’t particularly trying to avoid faulty casting when I picked out dice for this. None of the dice I was offered at Gen Con appeared to have this problem (and I was buying out of the color bins of d20’s at the manufacturer’s booths).

The calipers we were using are of a style that has a clamp-like flat section you close to measure the object (similar to these: http://en.wikipedia.org/wiki/File:DigitalCaliperEuro.jpg ). I placed the flat edge of the calipers directly across the faces of a die when I measured it (so it passed over both edges and the center over the numbers). Because of the caliper shape, if a die was concave or convex the calipers would have rested on whichever part of the surface was higher. The only time when I deviated from this procedure was to avoid the flashing on the Game Science dice. I measured across the center of the die, but I turned the die as needed to avoid the bulk of the flashing.

A few thoughts:

1. I’ve been interested in this topic, and am working on an automatic die roller to help measure the empirical effect of these distortions. Link with video is above; I’ve now added a camera taking photos of the dice, and custom OCR software to read the numbers, let a human quickly proof the recognition, and compute various statistical fairness tests. My tests are generally running 6,000 rolls of d6s, and comparing ancient generic dice with Chessex dice and with casino dice, which I gather are highly regulated by the Feds. (conclusion: casino dice are definitely fairer)

2. I agree with Mr. Neagley – The idea that no die is statistically fair or that big data sets are harmful is, I think, missing the point a bit. Any statistical test has a confidence interval, and you can set your confidence intervals appropriately to get the desired false positive and false negative rates. You can never prove that a die is fair or unfair with experimental data, but you can state the probability that a fair die will get rejected as unfair. I do agree that graphs are helpful to get a visual sense of “how bad” a deviation is.

3. I love this line of inquiry, and obviously I’ve also spent a lot of time on it myself, but I also have to admit that in RPGs, dice are basically there to add a bit of randomness in ways that make the game more fun. Zocchi’s rant about player characters getting killed because of bad dice misses the point that player characters might also be helped by bad dice – and that demanding precision in your dice pretends that the tables and rules you’re using are exactly fair and precise. Does banded armor really give exactly 5% more protection, statistically, than leather armor (or whatever your system says)? You could really have a substantially nonlinear distribution for a given die and it wouldn’t really change the game.

Not that it isn’t still a fascinating problem! 🙂

And – don’t we all tend to have favorite, “lucky” dice? What if you knew for sure that your lucky die was unfair? Should you stop using it??

Regarding point 3, hopefully the game’s designer had a goal, and created mechanics that achieve that goal as closely as practical given reasonably fair dice. If your dice aren’t reasonably fair, the results stop being what the designer expected, the mechanics stop working as the designer expected, and the game stops working as the designer expected. So, not good.

I can’t (yet) speak to a d20, but research on d6s suggests a serious problem; 29% of rolls coming up 1 is wildly different than the expected 16.6%. This research was on Chessex and Games Workshop dice. As a user of Chessex dice for several games (because it’s the cost effective way to get fistfuls of d6s), this worries me.

Take Mythender, a game tuned around very specific math, and for which I’m using Chessex dice. Mythender encourages the GM to play hard. A significant bias toward 1 is going to make the game much more difficult for the PCs. The introductory scenario (which I run frequently), is tuned to be exciting, but strongly biased toward a PC win; that tuning is in danger if I can’t trust my dice. And Mythender has a sort of built-in expiration date for PCs; if your dice err toward 1s, PCs are going to have a much shorter career than intended.

Or take Fiasco. Fiasco uses the dice to generate a flat set of results for picking story elements. If the dice are biased toward 1, we’ll be using the story elements that happened to be numbered 1 more than other elements. Repeated play will feel repetitive more quickly, a real shame given that the random story elements are specifically designed to create replayability.

Or for something a bit closer to traditional, take Dungeon World. The GM in Dungeon World never rolls the dice, and low results on 2d6 are bad for PCs. While failure is interesting (especially in Dungeon World), too much failure stops being interesting and can literally kill your character.

Asserted: Rules matter. (That assertion is its own argument. 🙂 ) Rules are (frequently) built on the assumption of reasonably fair dice. Thus, fair dice matter.

I do agree – 29% vs. 16.6% is a lot, and may end up changing some games perceptibly. I think it’s more noticeable on d6s than d20s, because they have fewer sides to choose from (if your 11 comes up 6% of the time on a d20 rather than 5%, it’s not as huge a deal).

For many games, it’s not the specific value you roll that’s important, so much as the cumulative histogram – you’re not trying to get a particular number, you’re trying to get a target number or higher – and so small deviations may get balanced out by others. If 11 rolls more often and 13 rolls less often, e.g., you don’t really care unless your target number is 12 or 13 – it makes no difference when you’re trying to beat any other number.
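Here is a quick Python check of that claim, using exact fractions. The skewed die is hypothetical: 11 comes up one percentage point more often than it should and 13 one point less, with every other face fair. The function name is made up for the example.

```python
from fractions import Fraction

def chance_to_meet(probs, target):
    """Probability of rolling `target` or higher.

    `probs[i]` is the probability of rolling face i + 1.
    """
    return sum(probs[target - 1:])

fair = [Fraction(1, 20)] * 20

# Hypothetical skewed d20: 11 is favored, 13 is shorted.
skewed = list(fair)
skewed[11 - 1] += Fraction(1, 100)
skewed[13 - 1] -= Fraction(1, 100)

# Target numbers where the skewed die's odds differ from a fair die's.
affected = [
    target
    for target in range(1, 21)
    if chance_to_meet(skewed, target) != chance_to_meet(fair, target)
]
print(affected)  # [12, 13]
```

Any target of 11 or lower includes both skewed faces, so the errors cancel, and any target of 14 or higher includes neither.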

I don’t have as much data as the study above, just… lemme see… 14 runs of 6,000 rolls per die for various dice. (I have no undergrads to flog.) But FWIW, I don’t see a pattern of always getting 1s from all dice. The pipped Chessex dice that I used, for example, were “reasonably” fair, and their most-frequent faces were 4, 5, and 6. The generic, no-name dice I had around that failed multiple fairness tests tended to roll high as well. So I wonder if there was a procedural difference, or just a difference in the dice.

But I’d still say the linkage between fair dice and entertaining games depends a lot on the game, and the group. Games with heavy use of d6 and a lot of dire, non-GM-mediated results do seem like they might be vulnerable. Fiasco seems like it’s on the other end of the spectrum – die rolls are really there just to suggest story element choices you might not make on your own or free you from having to choose. There’s nothing stopping you from renumbering the story elements in the charts, if certain ones come up too often, or just replacing them with something new that the players agree on.

Anyway, it’s fun to ponder.

When I wrote the article “Is your lucky die fair?” which dealt with running Chi square tests on your dice rolls, the very first comment I got was “I want to test my dice… and I am afraid to test my dice.”

There is definitely a sense of “magic” in dice that stats kills.

I’d be curious to see how “Metal” dice stack up. I just purchased a set of steel and copper dice for the novelty effect, but I’m now wondering how well made they are. Buying a stack of 10d20’s is no small economic feat for a test, but if you are looking for something later to update your comparison site, there you go.

I’d like to make contact with timothy webber and learn about his dice testing machine. Can you help me?

Louis Zocchi of Gamescience

I believe timothyweber.org is the Timothy Weber you’re interested in. He has a contact form on his website.

Thanks for the reminder about Timothy; it looks like he did some new work late last year that I’ll need to catch up on!

Laurke suffers from the delusion that all of my dice are shatter proof. All of my gem dice were made from the same expensive plastic used to make eye glasses. We chose this material because of its high luster and clarity. If hit with a hammer, they will shatter. However, my opaque dice were made with HIGH IMPACT plastic, which will survive the impact of a hammer. While attending an MDG convention in Detroit back in 1986, a customer picked up an Armory D-30 and handed it to Max Lipman. He told Max the die had a crack in it. Max put the die back out for sale and stated that it would function O.K. The customer handed the die back to Max and pointed out its crack again. Max put it back out for sale. The customer pulled it out again and said that the crack made it defective. Max said it was O.K. and to prove his point, Max put it on the concrete floor and slammed it with a hammer. After it shattered, Max said, well that one was cracked but this one is not. He hammered a new 30 sided die, which shattered like the 1st one. Max then handed the kid several dollars and told him to buy some Gamescience dice and bring them back to him. While en-route to my booth, Max got on the exhibit hall microphone and announced that the Armory was sponsoring a dice testing contest. Everyone who made dice was invited to bring their dice over to see if they could survive Max’s hammer. Heritage models and several other dice vendors quickly packed up and left the hall. The dice buyer told me Max had sent him down to buy dice for testing and he asked me which of them he should take. I let him make his own choices. Lucky for me he picked my opaque 12 siders for the test. After the crowd gathered around Max, he put one of his opaque D-30’s on the floor and shattered it with his hammer. The crowd jeered. Then Max said, wait until you see the Gamescience dice.

He put one on the floor and slammed it with his hammer. The die streaked out from under his hammer and ricocheted off of the far wall. They brought it back and discovered that it looked fine. Max hammered it again and it streaked out and ricocheted off of the other wall. After it was brought back and showed no scar, Max gave it another mighty whack which caused the die to ricochet off of the floor and lodge in the overhead light fixture which was 20 feet above. When he complained that the die kept slipping out from under the hammer, the dice buyer pulled a pliers out of his back pack and volunteered to hold the die with them. Max gave the pliered dice a mighty slam and hit the kid’s hand. The kid was in great pain, but he said, my left hand is strong enough to hold the die, and so he did. Max gave the die another mighty slam, but it showed no damage and the crowd cheered. Red faced, Max hit the pliered die with everything he had, but the die showed no damage and the crowd cheered again. Max slammed his hammer into the die a 3rd time and this time there was an imprint of the hammer on the die, but it did not shatter. I’ve thought about ways I could replicate this event at conventions, but there is a danger that shattered pieces of competitors’ dice could hit bystanders and cause injury. Although I have not done a slamming test on all of the dice available today, I suspect that they would shatter. If one of you performs a slamming test, please tell the rest of us your findings.

Mr Zocchi,

Sorry I missed your post by almost a year, but you could easily make a box of tough plexiglass with a spring loaded or pneumatic hammer inside that you trigger externally. Then you load the dice in the box, close it, trigger the hammer and any shards bounce harmlessly inside the box.

Someone wants to know if the weight of clear nail polish, will change die roll performances. If someone has the time and interest, I suggest the following. Ink 10 digits all in the same hemisphere, and leave the other 10 digits plain. Ink 10 digits all in the same hemisphere and cover them with clear nail polish. Because wax is heavier than ink, on a 3rd D-20, wax fill 10 of the digits all in the same hemisphere and leave its opposite hemisphere plain. Wax fill another 10 digits all in the same hemisphere and coat them with clear nail polish.

To make this test more significant, I suggest the tester make 3 or 4 copies of each test item to see if each version provides the same outcome. If someone does this, please print your findings here.

I have discovered that Sharpie makes a white inking pen which is water based. It has an extra fine point plastic tip, which stays sharp. If you make a mistake with this pen, just rub it off with your fingertip. Because it is water based, it is easy to clean off your hand. When the pen seems to run out of white, remove its tip and fill the gap with water. Then push a toothpick into the water-filled tip and push down. The toothpick will press a flat stopper, which allows the water into the mixing chamber. Put the point back into the barrel and put its cap on before shaking to mix the fresh water with unused pigment inside the paint chamber. So far, I have put water into my pen 5 times to bring it back to usability. You’ll probably have to go to an office supply store to get one. Its stock # is 7l641 35574. They cost about $4, and white paint-based pens are also available as stock # 7l641 35531. There is an assortment of colors available in both styles. When your paint pen has no paint left, pull out the tip and fill the void with lighter fluid. Use a toothpick to depress the trap inside the empty tip area. After the lighter fluid enters the pigment area, replace the tip, cap the pen and shake. I think it can be resuscitated 3 or 4 times. Good Luck. Louis Zocchi

I made a die-rolling machine and tested 19 d20s across five brands: http://markfickett.com/dice#geometry . This post was part of my inspiration, so thanks! I didn’t read the comment thread carefully enough before, but I also sent a message to Timothy Weber who seems to have done a very similar project ( http://timothyweber.org/dieroller ).

One interesting result (http://markfickett.com/dice#geometry): I compared a geometric analysis with experimental results from rolling d20s about 3000 times. For the dice that were less fair (Crystal Caste with 40% deviation per side) there was a clear correlation, but it wasn’t as clear for Koplow or Chessex, and there was no clear match between side-measurements and roll outcomes for the fairer dice or the two Game Science d20s I tested.
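
For readers who want to check their own roll counts, here is a rough sketch of a chi-square goodness-of-fit check for a d20. It is not necessarily the analysis used in the linked write-up. The function names `chi_square_d20` and `looks_unfair` are hypothetical, and the 5% significance level is an assumed convention. The only input is a list of rolled faces.

```python
from collections import Counter

# Chi-square critical value for 19 degrees of freedom at the 5% level.
CRITICAL_19_DF_5PCT = 30.144

def chi_square_d20(rolls):
    """Chi-square statistic comparing observed face counts to a fair d20."""
    counts = Counter(rolls)
    expected = len(rolls) / 20  # a fair die shows each face equally often
    return sum(
        (counts.get(face, 0) - expected) ** 2 / expected
        for face in range(1, 21)
    )

def looks_unfair(rolls):
    """True if the statistic exceeds the assumed 5% critical value."""
    return chi_square_d20(rolls) > CRITICAL_19_DF_5PCT

# Usage: 3000 recorded rolls, e.g. transcribed from a rolling session.
perfectly_even = list(range(1, 21)) * 150
stat = chi_square_d20(perfectly_even)  # 0.0 for perfectly even counts
```

With about 3000 rolls, each face is expected about 150 times, which is enough for the chi-square approximation to be reasonable. A statistic above the critical value suggests the die is not rolling uniformly, but it does not say which faces are favored.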

Hello Alan! You have given good info about how true your d20s are. Thanks for sharing.

It’s fascinating how detailed this investigation into dice irregularities is!

Wow, this is an impressively thorough analysis! It’s fascinating to see how much thought and measurement went into comparing dice quality. It definitely makes me appreciate the craftsmanship behind GameScience and Koplow dice even more.

Really interesting deep dive—love how it goes beyond the classic claims from Lou Zocchi and actually backs things up with measurements. The mix of visual stacking and caliper data makes the conclusions feel much more grounded, even if it’s not perfect science. Definitely makes you think twice about how “random” your dice really are.

Interesting experiment. A lot of players assume all dice roll fairly, but small imperfections can definitely influence results over time. It’s cool seeing someone actually test the randomness instead of just relying on assumptions.

Really interesting post. I never realized how much variation there could be with dice balance and randomness until reading this. The testing process and explanations made the whole topic surprisingly engaging, especially for anyone who enjoys tabletop games.